Do so-called ‘alternative’ metrics document attention to outputs from publishers, access types, funders, institutions and countries that are usually invisible in traditional citation metrics? Put another way, can altmetrics contribute to higher visibility of outputs usually excluded from mainstream metrication?

In this blog post I share some data resulting from text analysis I conducted on some of the metadata included in the complete Altmetric 2018 raw dataset.

I used Voyant Tools and OpenRefine to identify the dominant title keywords, publishers, subjects, access types, funders and authors’ country affiliations.
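The keyword counts in this post came from Voyant Tools, not from code. As a rough illustration of what such a term count involves, here is a minimal Python sketch. The two titles and the short stop-word list are hypothetical placeholders, not records from the Altmetric dataset, and Voyant's own tokenisation and stop-word list will differ.

```python
import re
from collections import Counter

# Hypothetical example titles, NOT taken from the Altmetric dataset.
titles = [
    "Global health and mortality: a cohort study",
    "A global study of plastic in the sea",
]

# Tiny illustrative stop-word list (an assumption; Voyant ships its own).
STOPWORDS = {"a", "and", "in", "of", "the"}


def keyword_counts(titles, stopwords=STOPWORDS):
    """Lower-case each title, split it into alphabetic tokens and count them."""
    counts = Counter()
    for title in titles:
        tokens = re.findall(r"[a-z]+", title.lower())
        counts.update(t for t in tokens if t not in stopwords)
    return counts


counts = keyword_counts(titles)
for term, n in counts.most_common(3):
    print(term, n)
```

Run against the real title column, a count like this should land close to the figures reported here, although differences in stop words and tokenisation would shift individual numbers.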

The Altmetric Top 100 is an annual list of the research that has received the most online attention on the platforms and services that Altmetric monitors each year. Altmetric has released an annual Top 100 list since 2013.

I have blogged here about the Altmetric Top 100 since 2014. You can read more about Altmetric and the list on the Altmetric Top 100 site.

Over time Altmetric has enriched the metadata it shares, and it now makes the raw data openly available on figshare. This is very welcome, as in the past we had to request the data directly and/or do our own analysis of the data to detect, for example, outputs’ access type, subjects, funders or institutional and country affiliations. This essential information is now provided in the dataset Altmetric shares.

You can verify some of these counts by comparing them with the counts offered by Altmetric through their Top 100 2018 interface. The raw dataset includes 212 outputs. Please note that metadata count totals do not always sum to 212 because, beyond the Top 100, the presence of metadata in the raw dataset is variable.

Usual limitations apply: raw data may need refining and deduplication, and counts may have been affected by metadata that was not disambiguated (e.g. randomised vs randomized) in the original dataset. All counts require further discussion, which, should I find time, I could add in the future.
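To make the disambiguation caveat concrete, the sketch below merges spelling and plural variants before comparing counts. The four counts are copied from the keyword table in this post; the variant mapping is a hypothetical editorial choice of mine and was not applied to any of the figures reported here.

```python
from collections import Counter

# Counts copied from the keyword table in this post.
raw_counts = Counter(
    {"randomised": 3, "randomized": 3, "patient": 3, "patients": 3}
)

# Hypothetical mapping from variant to preferred form (an assumption).
VARIANTS = {"randomized": "randomised", "patients": "patient"}


def merge_variants(counts, variants):
    """Add each variant's count to the count of its preferred form."""
    merged = Counter()
    for term, n in counts.items():
        merged[variants.get(term, term)] += n
    return merged


merged = merge_variants(raw_counts, VARIANTS)
print(merged["randomised"], merged["patient"])
```

Merged this way, ‘randomised’ and ‘patient’ would each count 6 rather than 3, which would move both terms noticeably higher up the keyword list.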

‘Cirrus’ Cloud of Top 100 Keywords in 212 Output Titles

Top 100 Keywords in 212 Output Titles

| Term | Count |
| --- | --- |
| global | 14 |
| study | 14 |
| association | 13 |
| human | 13 |
| mortality | 13 |
| adults | 11 |
| analysis | 11 |
| health | 11 |
| cancer | 10 |
| disease | 9 |
| effect | 8 |
| states | 8 |
| gender | 7 |
| impact | 7 |
| risk | 7 |
| trial | 7 |
| united | 7 |
| countries | 6 |
| evidence | 6 |
| genome | 6 |
| high | 6 |
| pain | 6 |
| population | 6 |
| science | 6 |
| cardiovascular | 5 |
| climate | 5 |
| cohort | 5 |
| low | 5 |
| million | 5 |
| social | 5 |
| time | 5 |
| activity | 4 |
| alcohol | 4 |
| blood | 4 |
| cause | 4 |
| data | 4 |
| earliest | 4 |
| earth | 4 |
| effects | 4 |
| energy | 4 |
| food | 4 |
| genetic | 4 |
| healthy | 4 |
| income | 4 |
| increase | 4 |
| largest | 4 |
| long | 4 |
| penguin | 4 |
| physical | 4 |
| plastic | 4 |
| prospective | 4 |
| reveals | 4 |
| sea | 4 |
| state | 4 |
| systematic | 4 |
| adolescents | 3 |
| africa | 3 |
| air | 3 |
| american | 3 |
| aspirin | 3 |
| associated | 3 |
| burden | 3 |
| care | 3 |
| cells | 3 |
| cluster | 3 |
| cognitive | 3 |
| complete | 3 |
| consumption | 3 |
| controlled | 3 |
| death | 3 |
| development | 3 |
| driven | 3 |
| early | 3 |
| elderly | 3 |
| emissions | 3 |
| environmental | 3 |
| exercise | 3 |
| gene | 3 |
| heat | 3 |
| ice | 3 |
| influenza | 3 |
| injury | 3 |
| intake | 3 |
| large | 3 |
| levels | 3 |
| life | 3 |
| major | 3 |
| mass | 3 |
| matter | 3 |
| meta | 3 |
| middle | 3 |
| new | 3 |
| patient | 3 |
| patients | 3 |
| randomised | 3 |
| randomized | 3 |
| sleep | 3 |
| suicide | 3 |
| treatment | 3 |
| trends | 3 |

Publishers by Output Count in Raw Dataset (212 Outputs)

| Publisher | Output Count |
| --- | --- |
| Springer Nature | 52 |
| American Association for the Advancement of Science | 31 |
| Elsevier | 25 |
| American Public Health Association | 16 |
| Massachusetts Medical Society | 15 |
| United States National Academy of Sciences | 15 |
| American Heart Association | 2 |
| BMJ | 2 |
| Public Library of Science | 2 |
| SAGE Publications | 2 |
| Alliance for Academic Internal Medicine | 1 |
| American Economic Association | 1 |
| Canadian Science Publishing | 1 |
| Cold Spring Harbor Laboratory Press | 1 |
| Oxford University Press | 1 |
| Royal Society | 1 |
| Taylor & Francis Group | 1 |

Subjects by Output Count in Raw Dataset (212 Outputs)

| Subject | Output Count |
| --- | --- |
| Medical & Health Sciences | 44 |
| Earth & Environmental Sciences | 17 |
| Studies in Human Society | 11 |
| Physical Sciences | 9 |
| History & Archaeology | 7 |
| Biological Sciences | 6 |
| Research & Reproducibility | 4 |
| Information & Computer Sciences | 2 |

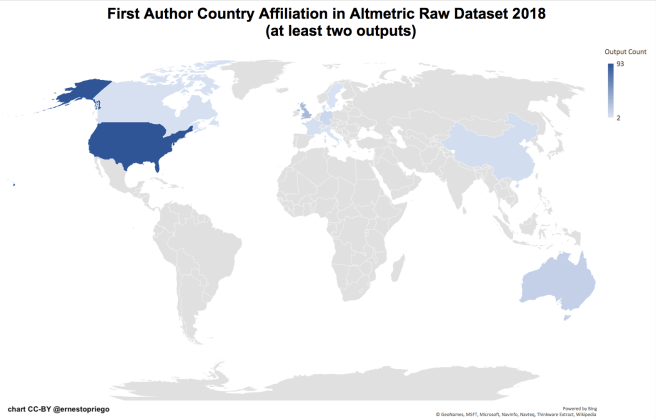

Countries of First Author Affiliation (where there were both single and several country affiliations in output byline) by Output in Raw Dataset (where metadata was available)

| Country of First Author; All | Output Count |
| --- | --- |
| United States | 93 |
| United Kingdom | 29 |
| Australia | 14 |
| Germany | 10 |
| China | 6 |
| France | 5 |
| Canada | 4 |
| Denmark | 4 |
| Global Consortium | 3 |
| Sweden | 3 |
| Israel | 2 |
| Italy | 2 |
| Austria | 1 |
| Belgium | 1 |
| Brazil | 1 |
| Egypt | 1 |
| Finland | 1 |
| Greece | 1 |
| Ireland | 1 |
| Mexico | 1 |
| No country data in affil; Spain; United States | 1 |
| Romania | 1 |
| Russia | 1 |
| South Africa | 1 |
| Sweden | 1 |

There Be Dragons

Outputs with single country (non international) author affiliation in Raw Dataset (where metadata was available)

| Single country author affiliation | Output Count |
| --- | --- |
| United States | 59 |
| United Kingdom | 11 |
| China | 5 |
| Australia | 2 |
| Canada | 2 |
| Italy | 2 |
| Austria | 1 |
| Brazil | 1 |
| France | 1 |
| Germany | 1 |
| Greece | 1 |

Access Types in Raw Dataset (where metadata was available)

| Access Type | Output Count |
| --- | --- |
| OA | 64 |
| Not OA | 61 |
| Free to read | 13 |

Access Types in Top 100 Outputs

| Access Type | Output Count |
| --- | --- |
| Not OA | 46 |
| OA | 41 |
| Free to read | 13 |

Some Insights

- No outputs in the Arts and Humanities proper are included in the dataset; even those in the History & Archaeology subject category (7 outputs) were published in STEM venues.

- Springer Nature dominates the list, even above Elsevier: is this because of Altmetric’s connection with the Nature Publishing Group? Stacy Konkiel from Altmetric responds: "Definitely not :) Our systems aren't preferential to NPG pubs/journals–they're agnostic. Why does NPG dominate? Hard to say!" (2018, Dec 12)

- The United States continues to dominate author country affiliations in both single author bylines and international multiple author bylines, followed at a distance by the UK.

- Brazil is the only South American country with First Author country affiliation in the raw dataset.

- South Africa and Egypt, with one output each, are the only African countries with an affiliation in the raw dataset.

- Stacy Konkiel from Altmetric is right to clarify that “the ‘countries’ aren’t just first author countries, they are for all authors associated with T100 papers (beyond the first 100, coverage is spottier, as we had less reason to enrich and check it manually)” and that “also worth looking into is the preponderance of papers in the T100 by a few of the same teams. I was genuinely surprised to see how similar they were, and given that, that they had enough attention to all make it into the T100.” (2018, Dec 12; thread)

- Not Open Access (including ‘Free to Read’, which is not Open Access) is the dominant access type in both the Top 100 and the complete raw dataset.
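The arithmetic behind the access-type observation can be checked with a few lines of Python. The counts are copied from the two access-type tables in this post; the function name is my own, and treating ‘Free to read’ as not Open Access follows the reading given above.

```python
# Counts copied from the two access-type tables in this post.
raw_dataset = {"OA": 64, "Not OA": 61, "Free to read": 13}
top_100 = {"Not OA": 46, "OA": 41, "Free to read": 13}


def not_oa_share(counts):
    """Return (not-OA outputs, total outputs, share), counting
    'Free to read' as not Open Access."""
    total = sum(counts.values())
    not_oa = counts["Not OA"] + counts["Free to read"]
    return not_oa, total, not_oa / total


for label, counts in [("Raw dataset", raw_dataset), ("Top 100", top_100)]:
    not_oa, total, share = not_oa_share(counts)
    print(f"{label}: {not_oa} of {total} outputs not OA ({share:.1%})")
```

On these figures, 74 of the 138 raw-dataset outputs with access metadata (53.6%) and 59 of the Top 100 (59.0%) are not Open Access. Taken on its own, however, OA (64) is the largest single category in the raw dataset; it is only when ‘Free to read’ is added to ‘Not OA’ that non-OA leads there.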

Future Work

A lot….

References

Engineering, Altmetric (2018). 2018 Altmetric Top 100 – dataset. figshare. Dataset. https://doi.org/10.6084/m9.figshare.7441304.v1

Konkiel, Stacy [skonkiel]. (2018, Dec 12). @ernestopriego “Springer Nature dominates the list…is this because of Altmetric’s connection with the Nature… https://t.co/jsA8P4yeez [Tweet]. Retrieved from https://twitter.com/skonkiel/status/1072944698807988225

Konkiel, Stacy [skonkiel]. (2018, Dec 12). @ernestopriego also worth noting is that the ‘countries’ aren’t just first author countries, they are for all autho… https://t.co/K5Z6pYhxjm [Tweet]. Retrieved from https://twitter.com/skonkiel/status/1072945115805704193

Konkiel, Stacy [skonkiel]. (2018, Dec 12). @ernestopriego Also worth looking into is the preponderance of papers in the T100 by a few of the same teams. I was… https://t.co/DvYNDkJRYz [Tweet]. Retrieved from https://twitter.com/skonkiel/status/1072946012388499457
