I’m sharing numerical summaries of the Twitter data I collected for the following bibliometrics event hashtags:

- #respbib18 (Responsible use of Bibliometrics in Practice, London, 30 January 2018) and

- #ResponsibleMetrics (The turning tide: A new culture of responsible metrics for research, London, 8 February 2018).

#respbib18 Summary

| Field | Value | Notes |
| --- | --- | --- |
| Event title | Responsible use of Bibliometrics in Practice | |
| Date | 30-Jan-18 | |
| Times | 9:00 am – 4:30 pm | GMT |
| Sheet ID | RB | |
| Hashtag | #respbib18 | |
| Number of links | 128 | |
| Number of RTs | 100 | |
| Number of Tweets | 360 | |
| Unique tweets | 343 | |
| First Tweet in Archive | 23/01/2018 11:44 | GMT |
| Last Tweet in Archive | 01/02/2018 16:17 | GMT |
| In Reply Ids | 15 | |
| In Reply @s | 49 | |
| Unique usernames | 54 | |
| Unique users who used tag only once | 26 | ← for context of engagement |

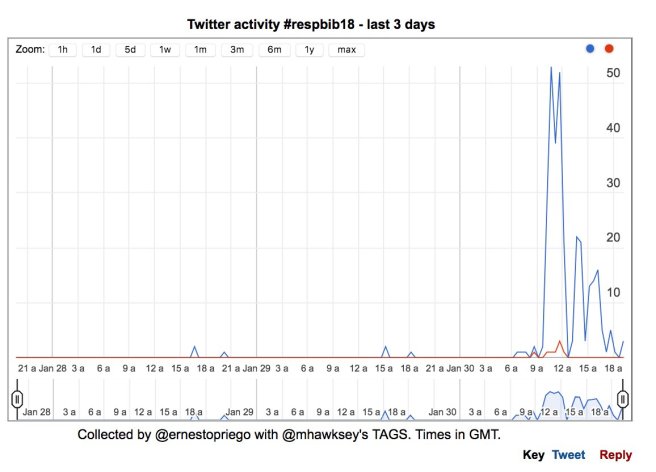

Twitter Activity

#ResponsibleMetrics Summary

| Field | Value | Notes |
| --- | --- | --- |
| Event title | The turning tide: A new culture of responsible metrics for research | |
| Date | 08-Feb-18 | |
| Times | 09:30 – 16:00 | GMT |
| Sheet ID | RM | |
| Hashtag | #ResponsibleMetrics | |
| Number of links | 210 | |
| Number of RTs | 318 | |
| Number of Tweets | 796 | |
| Unique tweets | 795 | |
| First Tweet in Archive | 05/02/2018 09:31 | GMT |
| Last Tweet in Archive | 08/02/2018 16:25 | GMT |
| In Reply Ids | 43 | |
| In Reply @s | 76 | |
| Unique usernames | 163 | |
| Unique usernames who used tag only once | 109 | ← for context of engagement |

Twitter Activity

#respbib18: 30 Most Frequent Terms

| Term | Raw frequency |
| --- | --- |
| metrics | 141 |
| responsible | 89 |
| bibliometrics | 32 |
| event | 32 |
| data | 29 |
| snowball | 25 |
| need | 24 |
| use | 21 |
| policy | 18 |
| today | 18 |
| looking | 17 |
| people | 16 |
| rankings | 16 |
| research | 16 |
| providers | 15 |
| forum | 14 |
| forward | 14 |
| just | 14 |
| practice | 14 |
| used | 14 |
| community | 13 |
| different | 12 |
| metric | 12 |
| point | 12 |
| using | 12 |
| available | 11 |
| know | 11 |
| says | 11 |
| talks | 11 |
| bibliometric | 10 |

#ResponsibleMetrics: 30 Most Frequent Terms

| Term | Raw frequency |
| --- | --- |
| metrics | 51 |
| need | 36 |
| research | 29 |
| indicators | 25 |
| panel | 16 |
| responsible | 15 |
| best | 13 |
| different | 13 |
| good | 13 |
| use | 13 |
| index | 12 |
| lots | 12 |
| people | 12 |
| value | 12 |
| like | 11 |
| practice | 11 |
| context | 10 |
| linear | 10 |
| rankings | 10 |
| saying | 10 |
| used | 10 |
| way | 10 |
| bonkers | 9 |
| just | 9 |
| open | 9 |
| today | 9 |
| universities | 9 |
| coins | 8 |
| currency | 8 |
| data | 8 |

Methods

Twitter data was mined with Tweepy. For robustness and quick charts, a parallel collection was done with TAGS. Data was checked and deduplicated with OpenRefine. Text analysis was performed with Voyant Tools. Text was anonymised through stoplists: two stoplists were applied (one to each dataset), covering usernames and Twitter-specific terms (such as RT, t.co and HTTPS) as well as the terms contained in hashtags. Event title keywords were not included in the stoplists.
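For readers who want to reproduce something similar without Voyant Tools, here is a minimal sketch of the stoplist and raw-frequency step in plain Python. It is not the pipeline used for the tables above: the `STOPLIST` contents, the `term_frequencies` function and the sample Tweets are all made up for illustration, and stripping usernames, links and hashtags with regular expressions is a simplification of what a curated stoplist does.

```python
import re
from collections import Counter

# Hypothetical stoplist: Twitter-specific terms plus a few common English words.
# A real stoplist would also list every username found in the dataset.
STOPLIST = {"rt", "t.co", "https", "http", "the", "a", "of", "for", "we", "and", "to"}

def term_frequencies(tweets, stoplist):
    """Return a Counter of raw term frequencies after applying the stoplist."""
    blocked = {term.lower() for term in stoplist}
    counts = Counter()
    for text in tweets:
        text = re.sub(r"https?://\S+", " ", text)  # drop links
        text = re.sub(r"@\w+", " ", text)          # drop @usernames
        text = re.sub(r"#\w+", " ", text)          # drop hashtags (simplification)
        for token in re.findall(r"[a-z][a-z']*", text.lower()):
            if token not in blocked:
                counts[token] += 1
    return counts

# Made-up sample Tweets, not taken from the archives.
sample = [
    "RT @someone: We need responsible metrics #respbib18 https://t.co/abc",
    "Responsible metrics need context #respbib18",
]
freqs = term_frequencies(sample, STOPLIST)
print(freqs.most_common(3))
```

Because event title keywords were kept out of the stoplists, words such as "responsible" and "metrics" survive this filter, which is consistent with them topping both tables.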

This dataset contains no sensitive, personal or personally identifiable data. Any usernames and names of individuals were removed at the data-refining stage, and again from the text analysis results if any remained.

Please note that the two datasets span different numbers of days of activity, as indicated in the summary tables. Source data was refined but duplicates might remain, which would affect the resulting raw term frequencies; the numbers should therefore be interpreted as indicative only and not as exact measurements. RTs count as Tweets, and raw frequencies reflect the repetition of terms implicit in retweeting.
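To make the summary counts and the deduplication caveat more concrete, the sketch below shows one way such figures could be derived from an archive. The record layout `(tweet_id, username, text)`, the `summarise` function and the sample rows are assumptions for illustration; the actual checks were done in OpenRefine, and detecting RTs by the "RT @" prefix is only a rough heuristic.

```python
from collections import Counter

# Hypothetical records: (tweet_id, username, text). Real archives have more fields.
rows = [
    (1, "user_a", "Looking forward to today's event #respbib18"),
    (2, "user_b", "RT @user_a: Looking forward to today's event #respbib18"),
    (2, "user_b", "RT @user_a: Looking forward to today's event #respbib18"),  # duplicate row
    (3, "user_a", "Metrics need context #respbib18"),
    (4, "user_c", "Interesting panel #respbib18"),
]

def summarise(rows):
    """Deduplicate on tweet ID, then compute a few summary counts."""
    unique = {}
    for tweet_id, user, text in rows:
        unique.setdefault(tweet_id, (user, text))  # keep the first occurrence only
    per_user = Counter(user for user, _ in unique.values())
    return {
        "tweets": len(unique),
        # Rough heuristic: treat text starting with "RT @" as a retweet.
        "retweets": sum(text.startswith("RT @") for _, text in unique.values()),
        "unique_usernames": len(per_user),
        "used_tag_once": sum(1 for n in per_user.values() if n == 1),
    }

summary = summarise(rows)
print(summary)
```

Note that the retweet stays in the corpus after deduplication, so its words are counted again in any term-frequency table; this is the repetition effect described above.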

So?

As usual, I share this hoping others might find it interesting and draw their own conclusions.

A very general insight for me is that we need a wider group engaging with these discussions. At most we are talking about a group of approximately 50 individuals who actively engaged on Twitter across both events.

From the Activity charts it is noticeable that tweeting recedes at breakout times, possibly indicating that most tweeting activity is coming from within the room. When hashtags create wide engagement, activity is more constant and does not exactly reflect the timings of actual real-time activity in the room.
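One way to check this kind of dip without a chart is to bin Tweets by clock hour. The following is only a sketch: the timestamps are invented, the `tweets_per_hour` function is hypothetical, and the day/month/year format is assumed from the first and last Tweet entries in the summary tables.

```python
from collections import Counter
from datetime import datetime

# Invented timestamps in the day/month/year format used in the summary tables.
timestamps = [
    "30/01/2018 09:05", "30/01/2018 09:40", "30/01/2018 10:15",
    "30/01/2018 11:02", "30/01/2018 11:30", "30/01/2018 11:55",
]

def tweets_per_hour(stamps):
    """Count Tweets in each clock hour."""
    hours = (
        datetime.strptime(s, "%d/%m/%Y %H:%M").replace(minute=0)
        for s in stamps
    )
    return Counter(hours)

counts = tweets_per_hour(timestamps)
for hour in sorted(counts):
    # Print a crude text bar chart, one '#' per Tweet.
    print(hour.strftime("%d/%m %H:00"), "#" * counts[hour])
```

Hours that fall inside a scheduled break would show shorter bars if most tweeting comes from people in the room.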

It seems to me that the production, requirement, use and interpretation of metrics for research assessment directly affect everyone in higher education, regardless of their position or role. The topic should not be obscure or limited to bibliometricians and RDM, Research and Enterprise or REF panel people.

Needless to say, I do not think everyone ‘engaged’ with these events or topics is or should be actively using the hashtag on Twitter (i.e. we don’t know how many people followed on Twitter). An assumption here is that we cannot detect or measure anything if there is no signal: more folks elsewhere might be interested in these events, but if they did not use the hashtag they were logically not detected here. That there is no signal measurable with the selected tools does not mean there is no signal elsewhere, and I’d like this to be a comment on metrics for assessment as well.

As for frequent terms, it remains apparent (as in other text analyses I have performed on academic Twitter hashtag archives) that frequently tweeted terms remain ‘neutral’ nouns, or adjectives if they are a keyword in the event’s title, subtitle or panel sessions (e.g. ‘responsible’). When a term like ‘snowball’ or ‘bonkers’ appears, it stands out. Due to the lack of more frequent modifiers, it remains hard to distant-read sentiment or critical stances, or even positions. The most frequent terms owe their frequency to RTs, not to consensus among ‘original’ Tweets.

It seems that if we wanted to demonstrate the value added by live-tweeting or using an event’s hashtag remotely, quantifying (metricating?) the active users, tweets over time, days of activity and frequent words would not be the way to go for all events, particularly not for events with relatively low Twitter activity.

As we have seen, automated text analysis is more likely to reveal mostly-neutral keywords than any divergence of opinion on, or additions to, the official discourse. We would have to look at less frequently repeated words, and perhaps at replies that did not use the hashtag, but this is not recommended as it would complicate things ethically. Though it is generally accepted that RTs do not imply endorsement, less frequent terms in Tweets with the hashtag could single out individuals, and if a hashtag was not included in a Tweet, it should be inferred that the Tweet is not meant to be part of that public discussion/corpus.
