This is just a quick snippet to jot down some ideas as some kind of follow-up to my blog post on the Ethics of researching Twitter datasets republished today [28 May 2014] by the LSE Impact blog.

If you have ever tried to keep up with Twitter you will know how hard it is. Tweets are like butterflies: one can only really look at them for long if one pins them down out of their natural environment. We have access to Twitter in any form because of Twitter’s API, which stands for “Application Programming Interface”. As Twitter explains it,

“An API is a defined way for a program to accomplish a task, usually by retrieving or modifying data. In Twitter’s case, we provide an API method for just about every feature you can see on our website. Programmers use the Twitter API to make applications, websites, widgets, and other projects that interact with Twitter. Programs talk to the Twitter API over HTTP, the same protocol that your browser uses to visit and interact with web pages.”

You might also know that free access to historic Twitter search results is limited to the last 7 days. This is due to several reasons, including the incredible amount of data that is requested from Twitter’s API, and (this is an educated guess) not disconnected from the fact that Twitter’s business model relies on its data being a commodity that can be resold for research. Twitter’s data is stored and managed by at least one well-known third party, Gnip, one of their “certified data reseller partners”.

For the researcher interested in Twitter data, this means that harvesting needs to be done not only automatically (needless to say, Storifying won’t cut it, even if your dataset is to be very small), but in real time.
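To make the consequence of the 7-day window concrete, here is a minimal Python sketch. It is only an illustration: the function names and the sample tweets are invented, and the only figure taken from the discussion above is the 7-day limit. It shows which tweets in a small sample could still be reached by a search run on a given day, and which would already be out of reach.

```python
from datetime import datetime, timedelta, timezone

# Assumption: the free search window is 7 days, as described in the post.
SEARCH_WINDOW = timedelta(days=7)


def still_searchable(tweet_time, now, window=SEARCH_WINDOW):
    """Return True if a tweet posted at tweet_time is inside the window at time now."""
    return now - tweet_time <= window


def split_by_window(tweets, now):
    """Split (id, timestamp) pairs into those still reachable and those already lost."""
    reachable = [t for t in tweets if still_searchable(t[1], now)]
    lost = [t for t in tweets if not still_searchable(t[1], now)]
    return reachable, lost


# Invented sample data: three tweets, identified by hypothetical ids.
now = datetime(2014, 5, 28, 12, 0, tzinfo=timezone.utc)
sample = [
    ("a", datetime(2014, 5, 27, 9, 0, tzinfo=timezone.utc)),
    ("b", datetime(2014, 5, 22, 9, 0, tzinfo=timezone.utc)),
    ("c", datetime(2014, 5, 10, 9, 0, tzinfo=timezone.utc)),
]

reachable, lost = split_by_window(sample, now)
print([t[0] for t in reachable])  # ['a', 'b']
print([t[0] for t in lost])       # ['c']
```

Anything in the second list can no longer be collected through a free search, which is why a collection that is not running while the conversation happens has to be started within days of it.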

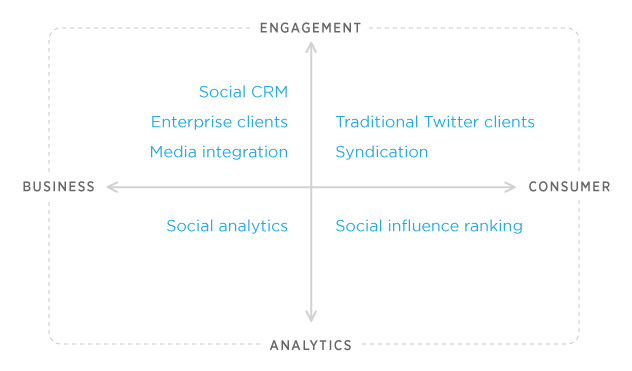

As Twitter grew, their ability to satisfy the requests from countless services changed. Around August 2012 they announced that version 1.0 of their API would be switched off in March 2013. About a month later they announced the release of a new version of their API. This imposed new limitations and guidelines (what they call their “Developer Rules of the Road”). I am not a developer, so I won’t attempt to explain these changes like one. For a researcher, this basically means that there is no way to do proper research on Twitter data without understanding how it works at API level, and this means understanding the limitations and possibilities this imposes on researchers.

Taking how the Twitter API works into consideration, it is not surprising that González-Bailón et al (2012) should alert us that the Twitter Search API isn’t 100% reliable, as it “over-represents the more central users and does not offer an accurate picture of peripheral activity” (“Assessing the bias in communication networks sampled from twitter”, SSRN 2185134). What’s a researcher to do? The whole butterfly colony cannot be captured with the nets most of us have available.

In April, 2010, the Library of Congress and Twitter signed an agreement providing the Library with an archive of public tweets from 2006 through April, 2010. The Library and Twitter agreed that Twitter would provide all public tweets on an ongoing basis under the same terms. On 4 January 2013, the Library of Congress announced an update on their Twitter collection, publishing a white paper [PDF] that summarized the Library’s work collecting Twitter (we haven’t heard of any new updates yet). There they said that

“Archiving and preserving outlets such as Twitter will enable future researchers access to a fuller picture of today’s cultural norms, dialogue, trends and events to inform scholarship, the legislative process, new works of authorship, education and other purposes.”

To get an idea of the enormity of the project, the Library’s white paper says that

“On February 28, 2012, the Library received the 2006-2010 archive through Gnip in three compressed files totaling 2.3 terabytes. When uncompressed the files total 20 terabytes. The files contained approximately 21 billion tweets, each with more than 50 accompanying metadata fields, such as place and description.

As of December 1, 2012, the Library has received more than 150 billion additional tweets and corresponding metadata, for a total including the 2006-2010 archive of approximately 170 billion tweets totaling 133.2 terabytes for two compressed copies.”
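A quick back-of-the-envelope calculation helps to put the quoted figures for the 2006-2010 archive in proportion. The sketch below uses only the numbers in the white paper excerpt; the one assumption is that a terabyte means 10^12 bytes, which the excerpt does not specify.

```python
# Figures quoted from the Library of Congress white paper (2006-2010 archive).
# Assumption: one terabyte is 10**12 bytes; the excerpt does not state the convention.
TB = 10**12

compressed_bytes = 2.3 * TB
uncompressed_bytes = 20 * TB
tweets_2006_2010 = 21 * 10**9

# How much the files grow when uncompressed.
compression_ratio = uncompressed_bytes / compressed_bytes

# Average uncompressed size of one tweet record, metadata included.
bytes_per_tweet = uncompressed_bytes / tweets_2006_2010

print(f"compression ratio: about {compression_ratio:.1f} to 1")
print(f"average uncompressed record: about {bytes_per_tweet:.0f} bytes per tweet")
```

Taken at face value, this gives a ratio of roughly 8.7 to 1 and an average of roughly 950 bytes per tweet. Since a tweet is at most 140 characters, most of each record would be the accompanying metadata rather than the text itself.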

To date, none of this data is yet publicly available to researchers. This is why many of us were very excited when on 5 February 2014 Twitter announced their call for “Twitter Data Grants” [closed on 15 March 2014]. This was/is a pilot programme [we haven’t heard anything about it yet either]. In the call, Twitter clarified that

“For this initial pilot, we’ll select a small number of proposals to receive free datasets. We can do this thanks to Gnip, one of our certified data reseller partners. They are working with us to give selected institutions free and easy access to Twitter datasets. In addition to the data, we will also be offering opportunities for the selected institutions to collaborate with Twitter engineers and researchers.”

As Martin Hawksey pointed out at the time,

“It’s worth stressing that Twitter’s initial pilot will be limited to a small number of proposals, but those who do get access will have the opportunity to “collaborate with Twitter engineers and researchers”. This isn’t the first time Twitter have opened data to researchers having made data available for a Jisc funded project to analyse the London Riot and while I expect Twitter end up with a handful of elite researchers/institutions hopefully the pilot will be extended.”

Most researchers out there are unlikely to benefit from access to huge Twitter data dumps. We are working with relatively small data sets, limited by the methods we use to collect, archive and study the data (and by our own disciplinary frameworks, [lack of] funding and other limitations). We are trying to do the talk whilst doing the walk, and conduct research on Twitter and about Twitter.

There should be no question now about how valuable Twitter data can be for researchers of perhaps all disciplines. Given the difficulty of properly collecting and analysing Twitter data as viewable from most Twitter Web and mobile clients (as most users get it) and the very short span of search results, there is a danger of losing huge amounts of valuable historical material. As Jason Leavey (2013) says, “social media presents a growing body of evidence that can inform social and economic policy”, but

“A more sophisticated and overarching approach that uses social media data as a source of primary evidence requires tools that are not yet available. Making sense of social media data in a robust fashion will require a range of different skills and disciplines to come together. This is a process already taking shape in the research community, but it could be hastened by government.”

At the moment, unlimited access to the data has been the privilege of a few lucky individuals and elite institutions.

So, why collect and share Twitter data?

In my case, Martin Hawksey’s Twitter Archive Google Spreadsheet has provided a relatively simple method to collect some tweets from academic Twitter backchannels (for an example, start with this post and this dataset). I have been steadily collecting them for qualitative and quantitative analysis and for archival and historical reasons since at least 2010.

My interest is also to share this data with the participants of the studied networks, in order to encourage collaboration, interest, curiosity, wider dissemination, awareness, reproducibility of my own findings and ideally further research. For the individual researcher there is a wealth of data out there that, within the limitations imposed by the Twitter API and the archival methods we have at our disposal, can be saved and made accessible before it disappears.

Figshare has been a brilliant addition to my Twitter data research workflow, enabling me to get a Digital Object Identifier for my uploaded outputs, and useful metrics that give me a smoke signal that I am not completely mad and alone in the world.

I believe that you should cite data in just the same way that you can cite other sources of information, such as articles and books. Sharing research data openly can have several benefits, not limited to

- providing public evidence of social media outputs (which are not static and are subject to modification and deletion) as static records, for public verification and assessment

- enabling easy research reuse and reproducibility of research source data

- allowing the reach of data to be measured or tracked

- strengthening research networks and fostering exchange and collaboration.
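On the point above about citing data just as one cites articles and books, a shared dataset with a Digital Object Identifier can be given a one-line citation. The following is a minimal sketch rather than a prescribed format: it assumes the common creator, year, title, publisher and identifier pattern, the function name is made up, and every value passed to it is a placeholder, not a real dataset or DOI.

```python
def format_data_citation(creator, year, title, publisher, identifier):
    """Build a one-line dataset citation: Creator (Year). Title. Publisher. Identifier"""
    return f"{creator} ({year}). {title}. {publisher}. {identifier}"


# Placeholder values only; substitute the details of an actual deposited dataset.
citation = format_data_citation(
    creator="Surname, Initial.",
    year=2014,
    title="Example archive of conference tweets",
    publisher="figshare",
    identifier="https://doi.org/10.xxxx/placeholder",
)
print(citation)
```

Keeping the identifier as a full link means that a reader of the citation can go straight to the static record of the data.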

Finally, some useful sources of information that have inspired me to share small data sets are:

- https://www.datacite.org

- http://www.dcc.ac.uk/digital-curation

- http://openuct.uct.ac.za/article/scap-outputs-changing-research-communication-practices

- http://opendatahandbook.org/

- http://figshare.com/blog/

- http://mashe.hawksey.info/

- http://thedata.org/

…and many others…

This coming academic year with my students at City, University of London I am looking forward to discussing and dealing practically with the challenges and opportunities of researching, collecting, curating, sharing and preserving data such as the kind we can obtain from Twitter.

If you’ve read this far you might be interested to know that James Baker (British Library) and I will lead a workshop at the dhAHRC event ‘Promoting Interdisciplinary Engagement in the Digital Humanities’ [PDF] at the University of Oxford on 13 June 2014.

This session will offer a space to consider the relationships between research in the arts and humanities and the use and reuse of research data.

Some thoughts on what research data is, the difference between available and useable data, mechanisms for sharing, and what types of sharing encourage reuse will open the session.

Through structured group work, the remainder of the session will encourage participants to reflect on their own research data, to consider what they would want to keep, to share with restrictions, or to share for unrestricted reuse, and the reasons for these choices.

Update: for some recent work with a small Twitter dataset,
